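Table of contents

- 4. Document Question Answering

- 4.1 Quick start

- 4.2 Step-by-step implementation

- 4.3 Other methods

- 5. Evaluation

- 5.1 Creating the QA app

- 5.2 Generating test data points

- 5.2.1 Hard-coded examples

- 5.2.2 LLM-Generated examples

- 5.3 Manual evaluation with LangChain debug

- 5.4 LLM assisted evaluation

- 5.5 LangChain Evaluation platform

- 6. Agents

- 6.1 Agents with built-in LangChain tools

- 6.2 Using the Python Agent

- 6.3 Debugging agent chains

- 6.4 Custom agent tools

- 7. Summary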

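- The DeepLearning.AI course "LangChain for LLM Application Development" (includes code), and the Bilibili video with Chinese subtitles 《LLM应用程序开发的LangChain》

- LangChain website, LangChain official documentation, LangChain 🦜️🔗 Chinese site, langchain hub

- OpenAI API Key (create an API key; the Usage option in the sidebar shows costs)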

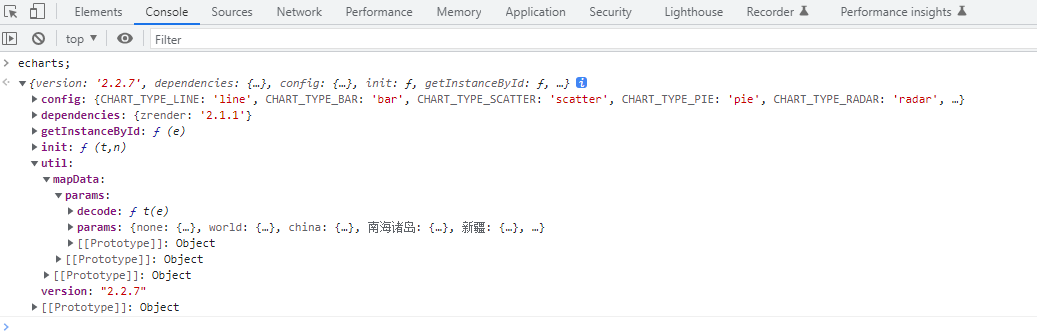

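The original official video comes with code that can be run directly. The English text is shown on the right; installing the immersive-translate plugin gives a side-by-side bilingual view, which works better.

4. Document Question Answering

4.1 Quick start

Document question answering means taking text from a PDF, web page, markdown file, or similar document and using an LLM to answer questions about it, so that you can dig deeper into the information. The process introduces several LangChain components, such as embedding models and vector stores.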

#pip install --upgrade langchain

import os

from dotenv import load_dotenv, find_dotenv

_ = load_dotenv(find_dotenv()) # read local .env file

from langchain.chains import RetrievalQA

from langchain.chat_models import ChatOpenAI

from langchain.document_loaders import CSVLoader

from langchain.vectorstores import DocArrayInMemorySearch

from IPython.display import display, Markdown

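- RetrievalQA: question answering over retrieved documents

- CSVLoader: loader for CSV documents

- DocArrayInMemorySearch: an in-memory vector store that needs no external database, which makes it a good starting point

- display, Markdown: helpers for rendering output in Jupyter

Next we load the CSV of outdoor clothing data and create a vector store.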

#pip install docarray

file = 'OutdoorClothingCatalog_1000.csv'

loader = CSVLoader(file_path=file)

# Create the vector store

from langchain.indexes import VectorstoreIndexCreator

index = VectorstoreIndexCreator(

vectorstore_cls=DocArrayInMemorySearch).from_loaders([loader])

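Create a query and use the index to get a response. When displayed, the response is a markdown table with the name and description of every shirt with sun protection, followed by a brief summary from the LLM.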

query ="Please list all your shirts with sun protection in a table in markdown and summarize each one."

response = index.query(query)

display(Markdown(response))

| Name | Description |

|---|---|

| Men’s Tropical Plaid Short-Sleeve Shirt | UPF 50+ rated, 100% polyester, wrinkle-resistant, front and back cape venting, two front bellows pockets |

| Men’s Plaid Tropic Shirt, Short-Sleeve | UPF 50+ rated, 52% polyester and 48% nylon, machine washable and dryable, front and back cape venting, two front bellows pockets |

| Men’s TropicVibe Shirt, Short-Sleeve | UPF 50+ rated, 71% Nylon, 29% Polyester, 100% Polyester knit mesh, wrinkle resistant, front and back cape venting, two front bellows pockets |

| Sun Shield Shirt by | UPF 50+ rated, 78% nylon, 22% Lycra Xtra Life fiber, wicks moisture, fits comfortably over swimsuit, abrasion resistant |

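All of the shirts offer UPF 50+ sun protection, blocking 98% of the sun's harmful rays. The fabrics are wrinkle-resistant and quick-drying, and every shirt has front and back cape venting and two front bellows pockets (translated).

4.2 Step-by-step implementation

An LLM can only process a few thousand tokens at a time. What if a document is far longer than that? This is where embeddings and vector stores come in.

Text can be converted into an embedding, a numeric vector. Texts with similar content should have similar embeddings, which can be checked by comparing the vectors in vector space.

Vector database: stores the vector representations (embeddings) of text. A large document is split into small chunks, and the embedding of each chunk is stored in the vector database; this is what happens when a vector index is created. The index can then be used to find the text chunks most relevant to an input.

When a query comes in, it is first converted into an embedding and compared with all the vectors in the vector database, and the n most similar chunks are returned. These chunks are then passed to the LLM to produce the final response. In section 4.1 a few lines of code were enough for document question answering; below we walk through what happens underneath.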
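The lookup step described above can be sketched without any external service. This is a minimal illustration, not how DocArrayInMemorySearch is implemented: the three-dimensional vectors and the names `cosine_similarity`, `top_n`, and `toy_index` are made up for the example, and cosine similarity is assumed as the comparison metric.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_n(query_vec, index, n=2):
    """Return the n (text, score) pairs most similar to query_vec."""
    scored = [(text, cosine_similarity(query_vec, vec)) for text, vec in index]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:n]


# Hypothetical 3-dimensional "embeddings"; real ones come from an embedding model.
toy_index = [
    ("sun-protection shirt", [0.9, 0.1, 0.0]),
    ("down jacket", [0.0, 0.2, 0.9]),
    ("UPF 50+ hat", [0.8, 0.3, 0.1]),
]

query_vec = [1.0, 0.2, 0.0]  # stands in for the embedded query
results = top_n(query_vec, toy_index, n=2)
print([text for text, _ in results])
```

The texts returned by `top_n` are what would be handed to the LLM as context.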

loader = CSVLoader(file_path=file)

docs = loader.load()

docs[0]

Document(page_content=": 0\nname: Women's Campside Oxfords\ndescription: This ultracomfortable lace-to-toe Oxford boasts a super-soft canvas, thick cushioning, and quality construction for a broken-in feel from the first time you put them on. \n\nSize & Fit: Order regular shoe size. For half sizes not offered, order up to next whole size. \n\nSpecs: Approx. weight: 1 lb.1 oz. per pair. \n\nConstruction: Soft canvas material for a broken-in feel and look. Comfortable EVA innersole with Cleansport NXT® antimicrobial odor control. Vintage hunt, fish and camping motif on innersole. Moderate arch contour of innersole. EVA foam midsole for cushioning and support. Chain-tread-inspired molded rubber outsole with modified chain-tread pattern. Imported. \n\nQuestions? Please contact us for any inquiries.", metadata={'source': 'OutdoorClothingCatalog_1000.csv', 'row': 0})

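As shown, each document is already small, so no further splitting is needed and we can create embeddings directly with OpenAIEmbeddings. The embeddings.embed_query method shows what embedding is produced for a specific piece of text.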

from langchain.embeddings import OpenAIEmbeddings

embeddings = OpenAIEmbeddings()

embed = embeddings.embed_query("Hi my name is Harrison")

print(len(embed))

print(embed[:5])

1536

[-0.021913960576057434, 0.006774206645786762, -0.018190348520874977, -0.039148248732089996, -0.014089343138039112]

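Next we create embeddings for all the CSV text and store them in a vector database, which the from_documents method does in one step. After that we can pass in a query and look up similar text.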

db = DocArrayInMemorySearch.from_documents(docs, embeddings)

query = "Please suggest a shirt with sunblocking"

docs = db.similarity_search(query)

len(docs)

4

docs[0]

Document(page_content=": 87\nname: Women's Tropical Plaid Shirt\ndescription: Our lightest hot-weather shirt lets you beat the heat with a flattering fit.\n\nSize & Fit\n- Slightly Fitted: Softly shapes the body.\n- Falls at hip.\n\nFabric & Care\n- 52% polyester/ 48% nylon.\n- UPF 50+ rated – the highest rated sun protection possible.\n\nAdditional Features\n- Keeps you cool and comfortable by wicking perspiration away from your skin, then dries in minutes.\n- Smooth buttons are easy on your hands.\n- Wrinkle resistant.\n- Front and back cape venting for ventilation.\n- Low-profile pockets and side shaping offer a more flattering fit.\n- Two front pockets, tool tabs and eyewear loop.\n- Imported.\n\nQuestions?\nContact us for more information.", metadata={'source': 'OutdoorClothingCatalog_1000.csv', 'row': 87})

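How do we use vector search for document question answering? First we create a retriever, a generic interface that takes any query and returns documents (vector search is one way to implement it; there are others).

Because we want a natural-language response, we also need a language model. We join the page content of the retrieved documents into a single variable, qdocs, insert qdocs into the prompt, and send it to the LLM to get a response.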

retriever = db.as_retriever()

llm = ChatOpenAI(temperature = 0.0)

qdocs = "".join([docs[i].page_content for i in range(len(docs))])

response = llm.call_as_llm(f"{qdocs} Question: Please list all your shirts with sun protection in a table in markdown and summarize each one.")

display(Markdown(response))

| Shirt Name | Description |

|---|---|

| Women’s Tropical Plaid Shirt | A lightweight, UPF 50+ rated shirt that wicks away perspiration and dries quickly. Features front and back cape venting, low-profile pockets, and side shaping for a flattering fit. |

| Performance Plus Woven Shirt | A breathable summer shirt made with quick-dry fabric that provides UPF 40+ sun protection. Dries in less than 14 minutes and is abrasion-resistant for durability. |

| Tropicview Baseball Cap | A sun-blocking baseball hat with UPF 50+ rated sun protection. Features a rear flap for extra coverage, elastic cord for adjustable fit, and Coolmax sweatband for moisture-wicking. |

| Smooth Comfort Check Shirt, Slightly Fitted | A men’s check shirt with TrueCool® fabric that wicks away moisture and provides wrinkle-free performance. Features a button-down collar and single patch pocket. |

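Every shirt offers sun protection, with UPF ratings ranging from 40+ to 50+. They are made of light, breathable fabric that wicks moisture and dries quickly. The Women's Tropical Plaid Shirt and the Tropicview Baseball Cap also have extra ventilation for added comfort. The Smooth Comfort Check Shirt is designed for men and uses TrueCool® fabric, which is moisture-wicking, quick-drying, and wrinkle-resistant (translated).

All of this can be wrapped in a chain: a retrieval QA chain that answers questions over the retrieved documents.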

qa_stuff = RetrievalQA.from_chain_type(

llm=llm,

chain_type="stuff",

retriever=retriever,

verbose=True)

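stuff is the simplest chain type: it places all the documents into the context and makes a single call to the language model. retriever is the interface used to fetch the documents whose content is passed to the language model.

Now we create a query and run the chain.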

query = "Please list all your shirts with sun protection in a table in markdown and summarize each one."

response = qa_stuff.run(query)

display(Markdown(response))

> Entering new chain...

> Finished chain.

| Shirt Name | Sun Protection | Fabric | Additional Features |

|---|---|---|---|

| Women’s Tropical Plaid Shirt | UPF 50+ | 52% polyester/ 48% nylon | Wicks perspiration, wrinkle-resistant, front and back cape venting, low-profile pockets, tool tabs, eyewear loop |

| Performance Plus Woven Shirt | UPF 40+ | 100% nylon | Quick-dry fabric, moisture-wicking, abrasion-resistant |

| Tropicview Baseball Cap | UPF 50+ | Body: 71% nylon, 29% polyester. Mesh: 85% polyester, 15% S.Cafe polyester. Sweatband: 100% polyester. | Rear flap, elastic cord, Coolmax sweatband, dark underbrim, mesh side panels |

- Women’s Tropical Plaid Shirt: A lightweight, hot-weather shirt with UPF 50+ sun protection. It wicks perspiration, dries quickly, and has front and back cape venting for ventilation. It also has low-profile pockets, tool tabs, and an eyewear loop.

- Performance Plus Woven Shirt: A breathable summer shirt made of quick-dry fabric that is UPF 40+ rated. It is moisture-wicking and abrasion-resistant, making it perfect for trail or travel.

- Tropicview Baseball Cap: A sun-blocking baseball hat with UPF 50+ rated sun protection. It has a rear flap for extra coverage that can be tucked away when not needed. It also has an elastic cord for infinite adjustment, a Coolmax sweatband, and mesh side panels for ventilation.

Those are the detailed steps. The short code from section 4.1 achieves the same result, and it also lets us specify the embedding or a different vectorstore type:

index = VectorstoreIndexCreator(

vectorstore_cls=DocArrayInMemorySearch,

embedding=embeddings,

).from_loaders([loader])

response = index.query(query, llm=llm)

display(Markdown(response))

| Name | Description |

|---|---|

| Men’s Tropical Plaid Short-Sleeve Shirt | UPF 50+ rated, 100% polyester, wrinkle-resistant, front and back cape venting, two front bellows pockets |

| Men’s Plaid Tropic Shirt, Short-Sleeve | UPF 50+ rated, 52% polyester and 48% nylon, machine washable and dryable, front and back cape venting, two front bellows pockets |

| Men’s TropicVibe Shirt, Short-Sleeve | UPF 50+ rated, 71% Nylon, 29% Polyester, 100% Polyester knit mesh, wrinkle resistant, front and back cape venting, two front bellows pockets |

| Sun Shield Shirt by | UPF 50+ rated, 78% nylon, 22% Lycra Xtra Life fiber, wicks moisture, fits comfortably over swimsuit, abrasion resistant |

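4.3 Other methods

The stuff method passes all the document content into a single prompt and sends it to the model; it is simple and effective. But what if we want to do the same kind of question answering over many different chunks?

Here are a few other methods:

- Map_reduce: each chunk is passed to the model together with the question to get an individual answer; a further language model call then summarizes the individual answers into a final answer. It can handle any number of documents, and the per-chunk calls can run in parallel. The downside is that it treats every document as independent, which is not always ideal.

- Refine: iterates over the documents, building each answer on the previous one. This is useful for combining information and building up an answer step by step, and it generally produces longer answers. The downside is that the calls cannot run in parallel.

- Map_rerank: makes one LLM call per document, scores each answer, and returns the highest-scoring one. You therefore need to tell the model that the more relevant a document is to the input, the higher the score should be, and give precise instructions. The calls can run in parallel, but the method is comparatively expensive.

Besides question answering, these methods can be used in other chains as well; Map_reduce, for example, is often used for summarization chains.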
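The difference between these chain types is mostly a difference in how many model calls are made and what each call sees. The sketch below illustrates that with a stand-in model; `fake_llm`, `toy_scorer`, and the prompt wording are hypothetical and are not LangChain's actual prompts or implementation.

```python
calls = []  # records every prompt sent to the stand-in model


def fake_llm(prompt):
    """Hypothetical stand-in for a real LLM call."""
    calls.append(prompt)
    return f"answer#{len(calls)}"


def stuff(docs, question, llm=fake_llm, separator="\n\n"):
    # One call: every document is placed in a single prompt.
    return llm(separator.join(docs) + "\nQuestion: " + question)


def map_reduce(docs, question, llm=fake_llm):
    # Map: one independent call per document (these could run in parallel).
    partial = [llm(doc + "\nQuestion: " + question) for doc in docs]
    # Reduce: one more call that combines the partial answers.
    return llm("\n".join(partial) + "\nCombine these answers to: " + question)


def refine(docs, question, llm=fake_llm):
    # Sequential: each call sees the previous answer plus one new document.
    answer = ""
    for doc in docs:
        answer = llm(f"Existing answer: {answer}\nNew context: {doc}\nQuestion: {question}")
    return answer


def map_rerank(docs, question, score_llm):
    # One call per document; score_llm returns (answer, score); keep the best.
    scored = [score_llm(doc, question) for doc in docs]
    return max(scored, key=lambda pair: pair[1])[0]


def toy_scorer(doc, question):
    # Hypothetical scorer: a real one would ask the LLM for an answer and a score.
    return (f"from {doc}", len(doc))


docs = ["doc A", "doc B", "doc C"]
question = "Which items have sun protection?"

counts = {}
for name, fn in [("stuff", stuff), ("map_reduce", map_reduce), ("refine", refine)]:
    calls.clear()
    fn(docs, question)
    counts[name] = len(calls)

print(counts)  # {'stuff': 1, 'map_reduce': 4, 'refine': 3}
best = map_rerank(["aa", "bbbb", "c"], question, toy_scorer)
```

With three documents, stuff makes one call, map_reduce makes three map calls plus one reduce call, and refine makes three calls that must run one after another.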

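5. Evaluation

When building a complex application with LLMs, an important question is how to evaluate its performance. And if you change something, such as swapping the LLM, changing how the vector database is used, changing the retrieval method, or adjusting other parameters, how do you know whether things got better or worse? This section introduces some evaluation tools.

An application is essentially a chain or sequence of steps, so first you need to understand the input and output of each step. Some tools act as visualizers or debuggers; another approach is to use LLMs and chains themselves to evaluate other language models, chains, and apps. As more development becomes prompt-based, the whole evaluation workflow for LLM applications is being rethought.

- Example generation

- Manual evaluation (and debugging)

- LLM-assisted evaluation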

import os

import openai

os.environ["OPENAI_API_KEY"] = 'your openai_api_key'  # placeholder; replace with your own key

openai.api_key = os.environ['OPENAI_API_KEY']

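5.1 Creating the QA app

First we take the QA chain from the previous section as the chain to evaluate. If an error occurs along the way, install docarray or tiktoken as the error message indicates.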

from langchain.chains import RetrievalQA

from langchain.chat_models import ChatOpenAI

from langchain.document_loaders import CSVLoader

from langchain.indexes import VectorstoreIndexCreator

from langchain.vectorstores import DocArrayInMemorySearch

# Load the data

file = 'OutdoorClothingCatalog_1000.csv'

loader = CSVLoader(file_path=file,encoding='utf-8')

data = loader.load()

# Create the index in one line

#!pip install docarray -i http://pypi.douban.com/simple

#!pip install tiktoken -i http://pypi.douban.com/simple

index = VectorstoreIndexCreator(

vectorstore_cls=DocArrayInMemorySearch

).from_loaders([loader])

Next we create the QA chain by specifying the LLM, the chain type, and the retriever.

llm = ChatOpenAI(temperature = 0.0)

qa = RetrievalQA.from_chain_type(

llm=llm,

chain_type="stuff",

retriever=index.vectorstore.as_retriever(),

verbose=True,

chain_type_kwargs = {"document_separator": "<<<<>>>>>"}

)

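Next we need to decide which data points to evaluate the QA chain on.

5.2 Generating test data points

Test data points are meant to simulate the data the model will face in the real world. They should have characteristics and a distribution similar to the actual use case, so that they test how the model behaves in practice. There are several ways to produce them, such as sampling from an existing dataset, synthesizing data, or hand-writing data points with specific characteristics.

The first method below is to look at some of the data, then write example questions and answers yourself for later evaluation.

5.2.1 Hard-coded examples

We look at two records and write a simple question and answer for each.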

data[10]

Document(page_content=": 10\nname: Cozy Comfort Pullover Set, Stripe\ndescription: Perfect for lounging, this striped knit set lives up to its name. We used ultrasoft fabric and an easy design that's as comfortable at bedtime as it is when we have to make a quick run out.\n\nSize & Fit\n- Pants are Favorite Fit: Sits lower on the waist.\n- Relaxed Fit: Our most generous fit sits farthest from the body.\n\nFabric & Care\n- In the softest blend of 63% polyester, 35% rayon and 2% spandex.\n\nAdditional Features\n- Relaxed fit top with raglan sleeves and rounded hem.\n- Pull-on pants have a wide elastic waistband and drawstring, side pockets and a modern slim leg.\n\nImported.", metadata={'source': 'OutdoorClothingCatalog_1000.csv', 'row': 10})

data[11]

Document(page_content=': 11\nname: Ultra-Lofty 850 Stretch Down Hooded Jacket\ndescription: This technical stretch down jacket from our DownTek collection is sure to keep you warm and comfortable with its full-stretch construction providing exceptional range of motion. With a slightly fitted style that falls at the hip and best with a midweight layer, this jacket is suitable for light activity up to 20° and moderate activity up to -30°. The soft and durable 100% polyester shell offers complete windproof protection and is insulated with warm, lofty goose down. Other features include welded baffles for a no-stitch construction and excellent stretch, an adjustable hood, an interior media port and mesh stash pocket and a hem drawcord. Machine wash and dry. Imported.', metadata={'source': 'OutdoorClothingCatalog_1000.csv', 'row': 11})

examples = [

{

"query": "Do the Cozy Comfort Pullover Set have side pockets?",

"answer": "Yes"

},

{

"query": "What collection is the Ultra-Lofty 850 Stretch Down Hooded Jacket from?",

"answer": "The DownTek collection"

}

]

5.2.2 LLM-Generated examples

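The drawback of manual labeling is that it is expensive, so we can consider using an LLM to generate question-answer examples automatically. LangChain provides a QA generation chain that takes documents as input and creates question-answer pairs from them. The apply_and_parse method applies an output parser to the output, turning the raw string into a dictionary containing a query-answer pair.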

from langchain.evaluation.qa import QAGenerateChain

example_gen_chain = QAGenerateChain.from_llm(ChatOpenAI())

new_examples = example_gen_chain.apply_and_parse(

[{"doc": t} for t in data[:5]]

)

new_examples[0]

{'query': "What is the weight of each pair of Women's Campside Oxfords?",

'answer': "The approximate weight of each pair of Women's Campside Oxfords is 1 lb. 1 oz."}
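To make the parsing step concrete, here is a minimal sketch of turning a raw model string into a query-answer dictionary. The `QUESTION:` / `ANSWER:` layout, the regular expression, and the `parse_qa` helper are assumptions for illustration; the real prompt and parser live inside QAGenerateChain and may differ.

```python
import re

# Assumed raw format; not taken from the LangChain source.
QA_PATTERN = re.compile(r"QUESTION:\s*(.*?)\s*ANSWER:\s*(.*)", re.DOTALL)


def parse_qa(raw_text):
    """Turn 'QUESTION: ... ANSWER: ...' text into a {'query', 'answer'} dict."""
    match = QA_PATTERN.search(raw_text)
    if match is None:
        raise ValueError(f"Could not parse a QA pair from: {raw_text!r}")
    return {"query": match.group(1).strip(), "answer": match.group(2).strip()}


raw = (
    "QUESTION: What is the weight of each pair of Women's Campside Oxfords?\n"
    "ANSWER: The approximate weight of each pair of Women's Campside Oxfords is 1 lb. 1 oz."
)
example = parse_qa(raw)
print(example["query"])
```

Raising an error on malformed output, rather than returning a partial dictionary, keeps bad examples out of the evaluation set.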

Now let's check the source document for this question-answer pair.

data[0]

Document(page_content=": 0\nname: Women's Campside Oxfords\ndescription: This ultracomfortable lace-to-toe Oxford boasts a super-soft canvas, thick cushioning, and quality construction for a broken-in feel from the first time you put them on. \n\nSize & Fit: Order regular shoe size. For half sizes not offered, order up to next whole size. \n\nSpecs: Approx. weight: 1 lb.1 oz. per pair. \n\nConstruction: Soft canvas material for a broken-in feel and look. Comfortable EVA innersole with Cleansport NXT® antimicrobial odor control. Vintage hunt, fish and camping motif on innersole. Moderate arch contour of innersole. EVA foam midsole for cushioning and support. Chain-tread-inspired molded rubber outsole with modified chain-tread pattern. Imported. \n\nQuestions? Please contact us for any inquiries.", metadata={'source': 'OutdoorClothingCatalog_1000.csv', 'row': 0})

Merge all the examples:

examples += new_examples

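Now that we have some examples, how do we evaluate? A direct approach is to pass them to the QA chain and look at the output.

Below we run a single query, and the printed result is limited. What prompt was sent to the model? Which documents were retrieved? If this were a complex chain with many intermediate steps, what were the intermediate results? None of this is shown.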

qa.run(examples[0]["query"])

> Entering new RetrievalQA chain...

> Finished chain.

'The Cozy Comfort Pullover Set, Stripe does have side pockets.'

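5.3 Manual evaluation with LangChain debug

To address this, we can use a small LangChain utility, langchain.debug. Set langchain.debug = True and run the example again; the output is now much more detailed.

The input first enters the RetrievalQA chain and then the StuffDocumentsChain. That chain uses the stuff method and calls an LLMChain, which has several inputs (question, context). So when question answering goes wrong, the LLM itself is not necessarily at fault; the retrieval step may be. Inspecting question and context closely helps tell which.

One level deeper, we can see what is sent to the ChatOpenAI model: the prompt, including the system message and the context.

The question comes after that, and the LLM produces the answer "Yes, the Cozy Comfort Pullover Set has side pockets.", which bubbles back up through the chain levels until it reaches the RetrievalQA chain and is returned as the final result. The trace also shows token usage and the model name, which let us track the total number of tokens used across the chain calls; this is closely tied to cost.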

import langchain

langchain.debug = True

qa.run(examples[0]["query"])

[chain/start] [1:RunTypeEnum.chain:RetrievalQA] Entering Chain run with input:

{

"query": "Do the Cozy Comfort Pullover Set have side pockets?"

}

[chain/start] [1:RunTypeEnum.chain:RetrievalQA > 2:RunTypeEnum.chain:StuffDocumentsChain] Entering Chain run with input:

[inputs]

[chain/start] [1:RunTypeEnum.chain:RetrievalQA > 2:RunTypeEnum.chain:StuffDocumentsChain > 3:RunTypeEnum.chain:LLMChain] Entering Chain run with input:

{

"question": "Do the Cozy Comfort Pullover Set have side pockets?",

"context": ": 10\nname: Cozy Comfort Pullover Set, Stripe\ndescription: Perfect for lounging, this striped knit set lives up to its name. We used ultrasoft fabric and an easy design that's as comfortable at bedtime as it is when we have to make a quick run out.\n\nSize & Fit\n- Pants are Favorite Fit: Sits lower on the waist.\n- Relaxed Fit: Our most generous fit sits farthest from the body.\n\nFabric & Care\n- In the softest blend of 63% polyester, 35% rayon and 2% spandex.\n\nAdditional Features\n- Relaxed fit top with raglan sleeves and rounded hem.\n- Pull-on pants have a wide elastic waistband and drawstring, side pockets and a modern slim leg.\n\nImported.<<<<>>>>>: 73\nname: Cozy Cuddles Knit Pullover Set\ndescription: Perfect for lounging, this knit set lives up to its name. We used ultrasoft fabric and an easy design that's as comfortable at bedtime as it is when we have to make a quick run out. \n\nSize & Fit \nPants are Favorite Fit: Sits lower on the waist. \nRelaxed Fit: Our most generous fit sits farthest from the body. \n\nFabric & Care \nIn the softest blend of 63% polyester, 35% rayon and 2% spandex.\n\nAdditional Features \nRelaxed fit top with raglan sleeves and rounded hem. \nPull-on pants have a wide elastic waistband and drawstring, side pockets and a modern slim leg. \nImported.<<<<>>>>>: 632\nname: Cozy Comfort Fleece Pullover\ndescription: The ultimate sweater fleece – made from superior fabric and offered at an unbeatable price. \n\nSize & Fit\nSlightly Fitted: Softly shapes the body. Falls at hip. \n\nWhy We Love It\nOur customers (and employees) love the rugged construction and heritage-inspired styling of our popular Sweater Fleece Pullover and wear it for absolutely everything. From high-intensity activities to everyday tasks, you'll find yourself reaching for it every time.\n\nFabric & Care\nRugged sweater-knit exterior and soft brushed interior for exceptional warmth and comfort. Made from soft, 100% polyester. 
Machine wash and dry.\n\nAdditional Features\nFeatures our classic Mount Katahdin logo. Snap placket. Front princess seams create a feminine shape. Kangaroo handwarmer pockets. Cuffs and hem reinforced with jersey binding. Imported.\n\n – Official Supplier to the U.S. Ski Team\nTHEIR WILL TO WIN, WOVEN RIGHT IN. LEARN MORE<<<<>>>>>: 151\nname: Cozy Quilted Sweatshirt\ndescription: Our sweatshirt is an instant classic with its great quilted texture and versatile weight that easily transitions between seasons. With a traditional fit that is relaxed through the chest, sleeve, and waist, this pullover is lightweight enough to be worn most months of the year. The cotton blend fabric is super soft and comfortable, making it the perfect casual layer. To make dressing easy, this sweatshirt also features a snap placket and a heritage-inspired Mt. Katahdin logo patch. For care, machine wash and dry. Imported."

}

[llm/start] [1:RunTypeEnum.chain:RetrievalQA > 2:RunTypeEnum.chain:StuffDocumentsChain > 3:RunTypeEnum.chain:LLMChain > 4:RunTypeEnum.llm:ChatOpenAI] Entering LLM run with input:

{

"prompts": [

"System: Use the following pieces of context to answer the users question. \nIf you don't know the answer, just say that you don't know, don't try to make up an answer.\n----------------\n: 10\nname: Cozy Comfort Pullover Set, Stripe\ndescription: Perfect for lounging, this striped knit set lives up to its name. We used ultrasoft fabric and an easy design that's as comfortable at bedtime as it is when we have to make a quick run out.\n\nSize & Fit\n- Pants are Favorite Fit: Sits lower on the waist.\n- Relaxed Fit: Our most generous fit sits farthest from the body.\n\nFabric & Care\n- In the softest blend of 63% polyester, 35% rayon and 2% spandex.\n\nAdditional Features\n- Relaxed fit top with raglan sleeves and rounded hem.\n- Pull-on pants have a wide elastic waistband and drawstring, side pockets and a modern slim leg.\n\nImported.<<<<>>>>>: 73\nname: Cozy Cuddles Knit Pullover Set\ndescription: Perfect for lounging, this knit set lives up to its name. We used ultrasoft fabric and an easy design that's as comfortable at bedtime as it is when we have to make a quick run out. \n\nSize & Fit \nPants are Favorite Fit: Sits lower on the waist. \nRelaxed Fit: Our most generous fit sits farthest from the body. \n\nFabric & Care \nIn the softest blend of 63% polyester, 35% rayon and 2% spandex.\n\nAdditional Features \nRelaxed fit top with raglan sleeves and rounded hem. \nPull-on pants have a wide elastic waistband and drawstring, side pockets and a modern slim leg. \nImported.<<<<>>>>>: 632\nname: Cozy Comfort Fleece Pullover\ndescription: The ultimate sweater fleece – made from superior fabric and offered at an unbeatable price. \n\nSize & Fit\nSlightly Fitted: Softly shapes the body. Falls at hip. \n\nWhy We Love It\nOur customers (and employees) love the rugged construction and heritage-inspired styling of our popular Sweater Fleece Pullover and wear it for absolutely everything. 
From high-intensity activities to everyday tasks, you'll find yourself reaching for it every time.\n\nFabric & Care\nRugged sweater-knit exterior and soft brushed interior for exceptional warmth and comfort. Made from soft, 100% polyester. Machine wash and dry.\n\nAdditional Features\nFeatures our classic Mount Katahdin logo. Snap placket. Front princess seams create a feminine shape. Kangaroo handwarmer pockets. Cuffs and hem reinforced with jersey binding. Imported.\n\n – Official Supplier to the U.S. Ski Team\nTHEIR WILL TO WIN, WOVEN RIGHT IN. LEARN MORE<<<<>>>>>: 151\nname: Cozy Quilted Sweatshirt\ndescription: Our sweatshirt is an instant classic with its great quilted texture and versatile weight that easily transitions between seasons. With a traditional fit that is relaxed through the chest, sleeve, and waist, this pullover is lightweight enough to be worn most months of the year. The cotton blend fabric is super soft and comfortable, making it the perfect casual layer. To make dressing easy, this sweatshirt also features a snap placket and a heritage-inspired Mt. Katahdin logo patch. For care, machine wash and dry. Imported.\nHuman: Do the Cozy Comfort Pullover Set have side pockets?"

]

}

[llm/end] [1:RunTypeEnum.chain:RetrievalQA > 2:RunTypeEnum.chain:StuffDocumentsChain > 3:RunTypeEnum.chain:LLMChain > 4:RunTypeEnum.llm:ChatOpenAI] [80.07s] Exiting LLM run with output:

{

"generations": [

[

{

"text": "Yes, the Cozy Comfort Pullover Set has side pockets.",

"generation_info": null,

"message": {

"content": "Yes, the Cozy Comfort Pullover Set has side pockets.",

"additional_kwargs": {},

"example": false

}

}

]

],

"llm_output": {

"token_usage": {

"prompt_tokens": 734,

"completion_tokens": 13,

"total_tokens": 747

},

"model_name": "gpt-3.5-turbo"

},

"run": null

}

[chain/end] [1:RunTypeEnum.chain:RetrievalQA > 2:RunTypeEnum.chain:StuffDocumentsChain > 3:RunTypeEnum.chain:LLMChain] [80.08s] Exiting Chain run with output:

{

"text": "Yes, the Cozy Comfort Pullover Set has side pockets."

}

[chain/end] [1:RunTypeEnum.chain:RetrievalQA > 2:RunTypeEnum.chain:StuffDocumentsChain] [80.08s] Exiting Chain run with output:

{

"output_text": "Yes, the Cozy Comfort Pullover Set has side pockets."

}

[chain/end] [1:RunTypeEnum.chain:RetrievalQA] [80.65s] Exiting Chain run with output:

{

"result": "Yes, the Cozy Comfort Pullover Set has side pockets."

}

'Yes, the Cozy Comfort Pullover Set has side pockets.'
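Since token counts drive cost, it can help to add them up across runs. The sketch below assumes you have collected dictionaries shaped like the `llm_output` field in the trace above; the helper name `total_token_usage` and the second run's numbers are made up for illustration.

```python
def total_token_usage(llm_outputs):
    """Sum the token_usage counters over a list of llm_output dicts."""
    totals = {"prompt_tokens": 0, "completion_tokens": 0, "total_tokens": 0}
    for output in llm_outputs:
        usage = output.get("token_usage", {})
        for key in totals:
            totals[key] += usage.get(key, 0)
    return totals


# The first entry copies the numbers from the trace above; the second is invented.
runs = [
    {
        "token_usage": {"prompt_tokens": 734, "completion_tokens": 13, "total_tokens": 747},
        "model_name": "gpt-3.5-turbo",
    },
    {
        "token_usage": {"prompt_tokens": 100, "completion_tokens": 20, "total_tokens": 120},
        "model_name": "gpt-3.5-turbo",
    },
]

totals = total_token_usage(runs)
print(totals)  # {'prompt_tokens': 834, 'completion_tokens': 33, 'total_tokens': 867}
```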

5.4 LLM assisted evaluation

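The previous section debugged a single example through the chain. What about many examples? One option is to inspect each one manually in the same way, checking what happens at each step to judge whether the intermediate steps are correct, but that is tedious and time-consuming.

So we return to our favorite solution: let an LLM do it. First turn off debug mode so that not too much is printed, then generate predictions for all the questions.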

# Turn off the debug mode

langchain.debug = False

predictions = qa.apply(examples)

> Entering new chain...

> Finished chain.

> Entering new chain...

> Finished chain.

> Entering new chain...

> Finished chain.

> Entering new chain...

> Finished chain.

> Entering new chain...

> Finished chain.

> Entering new chain...

> Finished chain.

> Entering new chain...

> Finished chain.

Next we import the QA evaluation chain and call its evaluate method, using a language model to help with the grading.

from langchain.evaluation.qa import QAEvalChain

llm = ChatOpenAI(temperature=0)

eval_chain = QAEvalChain.from_llm(llm)

graded_outputs = eval_chain.evaluate(examples, predictions)

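We loop over the examples and predictions and print the graded outputs. The Question and Real Answer come from the examples (the first two hand-written, the rest generated by the LLM), the Predicted Answer is produced by the QA chain, and the Predicted Grade is assigned by the evaluating language model.

# Print each example with its prediction and grade
for i, eg in enumerate(examples):
    print(f"Example {i}:")
    print("Question: " + predictions[i]['query'])
    print("Real Answer: " + predictions[i]['answer'])
    print("Predicted Answer: " + predictions[i]['result'])
    print("Predicted Grade: " + graded_outputs[i]['text'])
    print()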

Example 0:

Question: Do the Cozy Comfort Pullover Set have side pockets?

Real Answer: Yes

Predicted Answer: The Cozy Comfort Pullover Set, Stripe does have side pockets.

Predicted Grade: CORRECT

Example 1:

Question: What collection is the Ultra-Lofty 850 Stretch Down Hooded Jacket from?

Real Answer: The DownTek collection

Predicted Answer: The Ultra-Lofty 850 Stretch Down Hooded Jacket is from the DownTek collection.

Predicted Grade: CORRECT

Example 2:

Question: What is the weight of each pair of Women's Campside Oxfords?

Real Answer: The approximate weight of each pair of Women's Campside Oxfords is 1 lb. 1 oz.

Predicted Answer: The weight of each pair of Women's Campside Oxfords is approximately 1 lb. 1 oz.

Predicted Grade: CORRECT

Example 3:

Question: What are the dimensions of the small and medium Recycled Waterhog Dog Mat?

Real Answer: The dimensions of the small Recycled Waterhog Dog Mat are 18" x 28" and the dimensions of the medium Recycled Waterhog Dog Mat are 22.5" x 34.5".

Predicted Answer: The small Recycled Waterhog Dog Mat has dimensions of 18" x 28" and the medium size has dimensions of 22.5" x 34.5".

Predicted Grade: CORRECT

Example 4:

Question: What are some features of the Infant and Toddler Girls' Coastal Chill Swimsuit?

Real Answer: The swimsuit features bright colors, ruffles, and exclusive whimsical prints. It is made of four-way-stretch and chlorine-resistant fabric, ensuring that it keeps its shape and resists snags. The swimsuit is also UPF 50+ rated, providing the highest rated sun protection possible by blocking 98% of the sun's harmful rays. The crossover no-slip straps and fully lined bottom ensure a secure fit and maximum coverage. Finally, it can be machine washed and line dried for best results.

Predicted Answer: The Infant and Toddler Girls' Coastal Chill Swimsuit is a two-piece swimsuit with bright colors, ruffles, and exclusive whimsical prints. It is made of four-way-stretch and chlorine-resistant fabric that keeps its shape and resists snags. The swimsuit has UPF 50+ rated fabric that provides the highest rated sun protection possible, blocking 98% of the sun's harmful rays. The crossover no-slip straps and fully lined bottom ensure a secure fit and maximum coverage. It is machine washable and should be line dried for best results.

Predicted Grade: CORRECT

Example 5:

Question: What is the fabric composition of the Refresh Swimwear V-Neck Tankini Contrasts?

Real Answer: The body of the Refresh Swimwear V-Neck Tankini Contrasts is made of 82% recycled nylon and 18% Lycra® spandex, while the lining is made of 90% recycled nylon and 10% Lycra® spandex.

Predicted Answer: The Refresh Swimwear V-Neck Tankini Contrasts is made of 82% recycled nylon with 18% Lycra® spandex for the body and 90% recycled nylon with 10% Lycra® spandex for the lining.

Predicted Grade: CORRECT

Example 6:

Question: What is the fabric composition of the EcoFlex 3L Storm Pants?

Real Answer: The EcoFlex 3L Storm Pants are made of 100% nylon, exclusive of trim.

Predicted Answer: The fabric composition of the EcoFlex 3L Storm Pants is 100% nylon, exclusive of trim.

Predicted Grade: CORRECT
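With more examples, reading every grade by eye stops scaling, so a small tally helps. This sketch assumes each graded output is a dictionary with a `text` field holding the grade, as in the loop above; the `summarize_grades` helper and the sample data are made up for illustration.

```python
def summarize_grades(graded_outputs, key="text"):
    """Count each grade and compute the share marked CORRECT."""
    counts = {}
    for item in graded_outputs:
        # Exact match after normalizing: "INCORRECT" must not count as "CORRECT".
        grade = item[key].strip().upper()
        counts[grade] = counts.get(grade, 0) + 1
    total = sum(counts.values())
    accuracy = counts.get("CORRECT", 0) / total if total else 0.0
    return counts, accuracy


# Hypothetical grader outputs mirroring the seven CORRECT grades printed above.
sample = [{"text": "CORRECT"}] * 7
counts, accuracy = summarize_grades(sample)
print(counts, accuracy)  # {'CORRECT': 7} 1.0
```

Comparing the normalized grade exactly, instead of searching for the substring "CORRECT", avoids counting "INCORRECT" as a pass.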

5.5 LangChain Evaluation platform

auto-evaluator

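Finally, the LangChain evaluation platform can carry out all the evaluation steps above, persist them, and display them in a UI, with a nicer presentation of the results. Below is a session named DeepLearningai, where we can see the input and output of each step and click a chain for more detail. Clicking all the way down shows the system message, context, and answer sent to the LLM. The button in the upper right imports data. The platform can keep running in the background while examples are gradually added for evaluation.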

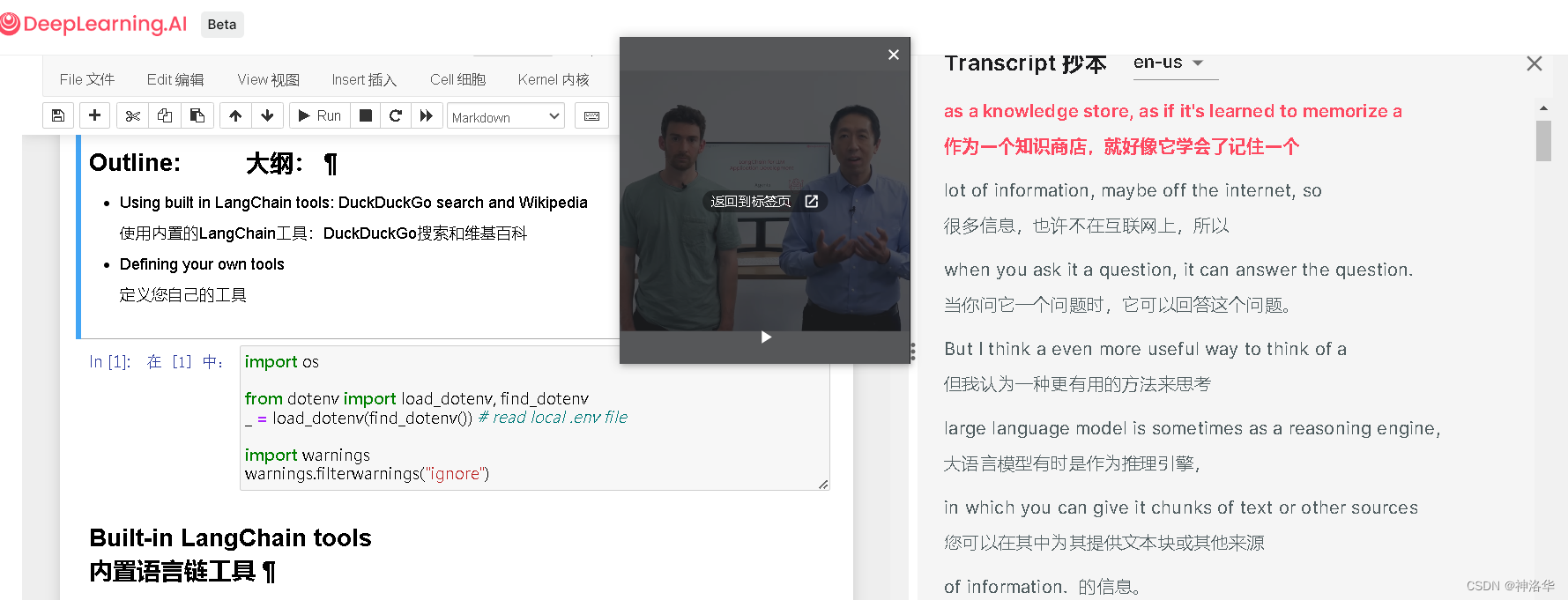

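6. Agents

An LLM is better thought of as a reasoning engine than as a knowledge store. Give it chunks of text or other sources of information, and it can combine the background knowledge it learned from the internet with the new information you provide to answer questions, reason through content, or even decide what to do next. This is what LangChain's Agents framework helps you do. (It feels much like ChatGPT's plugin system.)

Below we cover what agents are, how to create and use them, and how to equip them with different types of tools (such as the search engine built into LangChain), as well as how to create your own tools so that an agent can interact with any data store, any API, or any function you want.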

- Using built in LangChain tools: DuckDuckGo search and Wikipedia

- Defining your own tools

import os

from dotenv import load_dotenv, find_dotenv

_ = load_dotenv(find_dotenv()) # read local .env file

import warnings

warnings.filterwarnings("ignore")

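6.1 Agents with built-in LangChain tools

Import the relevant libraries and use the ChatOpenAI language model as the agent's reasoning engine, which connects to other data sources and computation.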

#!pip install -U wikipedia

from langchain.agents.agent_toolkits import create_python_agent

from langchain.agents import load_tools, initialize_agent

from langchain.agents import AgentType

from langchain.tools.python.tool import PythonREPLTool

from langchain.python import PythonREPL

from langchain.chat_models import ChatOpenAI

llm = ChatOpenAI(temperature=0)

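Load the LLM math tool and the Wikipedia tool. llm-math is actually a chain that uses a language model together with a calculator to solve math problems. wikipedia searches and queries Wikipedia. Then initialize the agent with the tools, the llm, and the agent type.

AgentType specifies which kind of agent to use. In CHAT_ZERO_SHOT_REACT_DESCRIPTION, CHAT means the agent is optimized for chat models, and REACT refers to ReAct, a prompting technique designed to get the best reasoning performance out of a language model.

The first lesson covered output parsers and how to parse the LLM's string output into a specific format for downstream use. Here we set handle_parsing_errors=True, which means that when the output cannot be parsed, the malformed text is passed back to the language model so that it can correct itself. This matters in practice.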

tools = load_tools(["llm-math","wikipedia"], llm=llm)

agent= initialize_agent(

tools,

llm,

agent=AgentType.CHAT_ZERO_SHOT_REACT_DESCRIPTION,

handle_parsing_errors=True,

verbose = True)

agent("What is the 25% of 300?")

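The printed output shows the whole process:

- Thought

- Action: a JSON block with two fields

  - action: the tool to use, here the calculator

  - action input: the input passed to the tool

- Observation: the calculator's answer is 75 (shown in blue)

- Thought: back in the language model, which notes that the calculator returned 75

- Final Answer: 25% of 300 is 75.0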
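One step of that loop, from the model's Action block to the Observation, can be sketched as follows. This is an illustration of the idea only, not LangChain's agent executor: the `TOOLS` registry, the toy `calculator`, the `run_step` helper, and the error messages are hypothetical.

```python
import json
import re

FENCE = "`" * 3  # three backticks, built this way so the sample text can contain a fence
ACTION_RE = re.compile(FENCE + r"(?:json)?\s*(\{.*?\})\s*" + FENCE, re.DOTALL)


def calculator(expression):
    """Toy calculator tool: supports only 'a * b' in this sketch."""
    left, right = expression.split("*")
    return str(float(left) * float(right))


TOOLS = {"Calculator": calculator}  # hypothetical tool registry


def run_step(llm_text):
    """Parse one Action block and run the tool; return the Observation text."""
    match = ACTION_RE.search(llm_text)
    if match is None:
        # Same idea as handle_parsing_errors: report the problem instead of crashing,
        # so the message can be sent back to the model for another attempt.
        return "Could not parse an action; please answer in the expected format."
    try:
        action = json.loads(match.group(1))
        tool = TOOLS[action["action"]]
    except (json.JSONDecodeError, KeyError) as err:
        return f"Invalid action: {err}"
    return tool(action["action_input"])


llm_text = (
    "Thought: I need to compute 25% of 300.\n"
    "Action:\n" + FENCE + "\n"
    '{"action": "Calculator", "action_input": "300 * 0.25"}\n'
    + FENCE
)
observation = run_step(llm_text)
print(observation)  # 75.0
```

In a full agent, the observation would be appended to the prompt and the model called again until it emits a final answer.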

Next is a Wikipedia example. We want to learn about Tom M. Mitchell, and the model recognizes that it should call Wikipedia to answer.

question = "Tom M. Mitchell is an American computer scientist \

and the Founders University Professor at Carnegie Mellon University (CMU)\

what book did he write?"

result = agent(question)

> Entering new AgentExecutor chain...

Thought: I should use Wikipedia to find the answer to this question.

Action:

```

{

"action": "Wikipedia",

"action_input": "Tom M. Mitchell"

}

```

Observation: Page: Tom M. Mitchell

Summary: Tom Michael Mitchell (born August 9, 1951) is an American computer scientist and the Founders University Professor at Carnegie Mellon University (CMU). He is a founder and former Chair of the Machine Learning Department at CMU. Mitchell is known for his contributions to the advancement of machine learning, artificial intelligence, and cognitive neuroscience and is the author of the textbook Machine Learning. He is a member of the United States National Academy of Engineering since 2010. He is also a Fellow of the American Academy of Arts and Sciences, the American Association for the Advancement of Science and a Fellow and past President of the Association for the Advancement of Artificial Intelligence. In October 2018, Mitchell was appointed as the Interim Dean of the School of Computer Science at Carnegie Mellon.

Page: Tom Mitchell (Australian footballer)

Summary: Thomas Mitchell (born 31 May 1993) is a professional Australian rules footballer playing for the Collingwood Football Club in the Australian Football League (AFL). He previously played for the Sydney Swans from 2012 to 2016, and the Hawthorn Football Club between 2017 and 2022. Mitchell won the Brownlow Medal as the league's best and fairest player in 2018 and set the record for the most disposals in a VFL/AFL match, accruing 54 in a game against Collingwood during that season.

Thought:The book that Tom M. Mitchell wrote is "Machine Learning".

Action:

```

{

"action": "Wikipedia",

"action_input": "Machine Learning (book)"

}

```

Observation: Page: Machine learning

Summary: Machine learning (ML) is a branch of artificial intelligence that leverages data to improve computer performance by giving machines the ability to "learn".Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks.A subset of machine learning is closely related to computational statistics, which focuses on making predictions using computers, but not all machine learning is statistical learning. The study of mathematical optimization delivers methods, theory and application domains to the field of machine learning. Data mining is a related field of study, focusing on exploratory data analysis through unsupervised learning.Some implementations of machine learning use data and artificial neural networks in a way that mimics the working of a biological brain.In its application across business problems, machine learning is also referred to as predictive analytics.

Page: Quantum machine learning

Summary: Quantum machine learning is the integration of quantum algorithms within machine learning programs. The most common use of the term refers to machine learning algorithms for the analysis of classical data executed on a quantum computer, i.e. quantum-enhanced machine learning. While machine learning algorithms are used to compute immense quantities of data, quantum machine learning utilizes qubits and quantum operations or specialized quantum systems to improve computational speed and data storage done by algorithms in a program. This includes hybrid methods that involve both classical and quantum processing, where computationally difficult subroutines are outsourced to a quantum device. These routines can be more complex in nature and executed faster on a quantum computer. Furthermore, quantum algorithms can be used to analyze quantum states instead of classical data. Beyond quantum computing, the term "quantum machine learning" is also associated with classical machine learning methods applied to data generated from quantum experiments (i.e. machine learning of quantum systems), such as learning the phase transitions of a quantum system or creating new quantum experiments. Quantum machine learning also extends to a branch of research that explores methodological and structural similarities between certain physical systems and learning systems, in particular neural networks. For example, some mathematical and numerical techniques from quantum physics are applicable to classical deep learning and vice versa. Furthermore, researchers investigate more abstract notions of learning theory with respect to quantum information, sometimes referred to as "quantum learning theory".

Page: Timeline of machine learning

Summary: This page is a timeline of machine learning. Major discoveries, achievements, milestones and other major events in machine learning are included.

Thought:Tom M. Mitchell wrote the book "Machine Learning".

Final Answer: "Machine Learning".

> Finished chain.

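The model called Wikipedia, and its observation is shown in yellow (LangChain uses different colors for the observations of different tools). The search returned two pages, one for Tom M. Mitchell the computer scientist and one for Tom Mitchell the Australian footballer. The book title the question asks for appears in the summary of the first page. The model then tried to look up more information about "Machine Learning (book)", concluded that the correct answer is Machine Learning, and returned it.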

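6.2 Using the Python Agent

The Python Agent provides Copilot-like functionality. The tool used here is PythonREPLTool. A REPL (read-eval-print loop) is an interactive way to run code, much like a Jupyter notebook.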

agent = create_python_agent(

llm,

tool=PythonREPLTool(),

verbose=True)

customer_list = [["Harrison", "Chase"],

["Lang", "Chain"],

["Dolly", "Too"],

["Elle", "Elem"],

["Geoff","Fusion"],

["Trance","Former"],

["Jen","Ayai"]

]

agent.run(f"""Sort these customers by last name and then first name and print the output: {customer_list}""")

The model realizes that the sorted function can solve this problem, then calls the Python REPL to run it.

> Entering new AgentExecutor chain...

I can use the sorted() function to sort the list of customers by last name and then first name. I will need to provide a key function to sorted() that returns a tuple of the last name and first name in that order.

Action: Python REPL

Action Input:

```

customers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]

sorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))

for customer in sorted_customers:

    print(customer)

```

Observation: ['Jen', 'Ayai']

['Lang', 'Chain']

['Harrison', 'Chase']

['Elle', 'Elem']

['Trance', 'Former']

['Geoff', 'Fusion']

['Dolly', 'Too']

Thought:The customers are now sorted by last name and then first name.

Final Answer: [['Jen', 'Ayai'], ['Lang', 'Chain'], ['Harrison', 'Chase'], ['Elle', 'Elem'], ['Trance', 'Former'], ['Geoff', 'Fusion'], ['Dolly', 'Too']]

> Finished chain.

"[['Jen', 'Ayai'], ['Lang', 'Chain'], ['Harrison', 'Chase'], ['Elle', 'Elem'], ['Trance', 'Former'], ['Geoff', 'Fusion'], ['Dolly', 'Too']]"

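6.3 Debugging agent chains

Setting langchain.debug=True prints every level of the run, which gives a closer look at what the model is doing. This debug mode is useful when an agent call behaves strangely.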

import langchain

langchain.debug=True

agent.run(f"""Sort these customers by \

last name and then first name \

and print the output: {customer_list}""")

langchain.debug=False
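If agent.run raises an exception, the final langchain.debug=False line never executes and debug output stays on. One way to guard against that is a small context manager. This is a sketch, not part of LangChain: debug_enabled is a hypothetical helper, and a SimpleNamespace stands in for the langchain module so the example runs without LangChain installed.

```python
from contextlib import contextmanager
from types import SimpleNamespace


@contextmanager
def debug_enabled(module):
    """Set module.debug to True inside the block and restore the old value afterwards."""
    previous = getattr(module, "debug", False)
    module.debug = True
    try:
        yield module
    finally:
        # Runs even if the body raises, so the flag is always restored.
        module.debug = previous


# Stand-in object (assumption) with the same `debug` attribute as the langchain module.
fake_langchain = SimpleNamespace(debug=False)

with debug_enabled(fake_langchain):
    inside = fake_langchain.debug   # True while the block is running
after = fake_langchain.debug        # restored once the block exits
print(inside, after)
```

With LangChain installed, the same helper could presumably be used as `with debug_enabled(langchain): agent.run(...)`.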

[chain/start] [1:chain:AgentExecutor] Entering Chain run with input: # this step is the top-level agent executor

{

"input": "Sort these customers by last name and then first name and print the output: [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]"

}

[chain/start] [1:chain:AgentExecutor > 2:chain:LLMChain] Entering Chain run with input: # this level is the LLMChain

{

"input": "Sort these customers by last name and then first name and print the output: [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]",

"agent_scratchpad": "",

"stop": [

"\nObservation:",

"\n\tObservation:"

]

}

[llm/start] [1:chain:AgentExecutor > 2:chain:LLMChain > 3:llm:ChatOpenAI] Entering LLM run with input: # the actual call to the LLM; the input is the formatted prompt, which lists the tools it can access and explains how to format the output

{

"prompts": [

"Human: You are an agent designed to write and execute python code to answer questions.\nYou have access to a python REPL, which you can use to execute python code.\nIf you get an error, debug your code and try again.\nOnly use the output of your code to answer the question. \nYou might know the answer without running any code, but you should still run the code to get the answer.\nIf it does not seem like you can write code to answer the question, just return \"I don't know\" as the answer.\n\n\nPython REPL: A Python shell. Use this to execute python commands. Input should be a valid python command. If you want to see the output of a value, you should print it out with `print(...)`.\n\nUse the following format:\n\nQuestion: the input question you must answer\nThought: you should always think about what to do\nAction: the action to take, should be one of [Python REPL]\nAction Input: the input to the action\nObservation: the result of the action\n... (this Thought/Action/Action Input/Observation can repeat N times)\nThought: I now know the final answer\nFinal Answer: the final answer to the original input question\n\nBegin!\n\nQuestion: Sort these customers by last name and then first name and print the output: [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nThought:"

]

}

[llm/end] [1:chain:AgentExecutor > 2:chain:LLMChain > 3:llm:ChatOpenAI] [5.34s] Exiting LLM run with output:

{

"generations": [

[

{

"text": "I can use the `sorted()` function to sort the list of customers. I will need to provide a key function that specifies the sorting order based on last name and then first name.\nAction: Python REPL\nAction Input: \n```python\ncustomers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nsorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))\nsorted_customers\n```",

"generation_info": null,

"message": {

"content": "I can use the `sorted()` function to sort the list of customers. I will need to provide a key function that specifies the sorting order based on last name and then first name.\nAction: Python REPL\nAction Input: \n```python\ncustomers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nsorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))\nsorted_customers\n```",

"additional_kwargs": {},

"example": false

}

}

]

],

"llm_output": {

"token_usage": {

"prompt_tokens": 326,

"completion_tokens": 129,

"total_tokens": 455

},

"model_name": "gpt-3.5-turbo"

}

}

[chain/end] [1:chain:AgentExecutor > 2:chain:LLMChain] [5.34s] Exiting Chain run with output:

{

"text": "I can use the `sorted()` function to sort the list of customers. I will need to provide a key function that specifies the sorting order based on last name and then first name.\nAction: Python REPL\nAction Input: \n```python\ncustomers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nsorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))\nsorted_customers\n```"

}

[tool/start] [1:chain:AgentExecutor > 4:tool:Python REPL] Entering Tool run with input:

"```python

customers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]

sorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))

sorted_customers

```"

[tool/end] [1:chain:AgentExecutor > 4:tool:Python REPL] [0.383ms] Exiting Tool run with output:

""

[chain/start] [1:chain:AgentExecutor > 5:chain:LLMChain] Entering Chain run with input:

{

"input": "Sort these customers by last name and then first name and print the output: [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]",

"agent_scratchpad": "I can use the `sorted()` function to sort the list of customers. I will need to provide a key function that specifies the sorting order based on last name and then first name.\nAction: Python REPL\nAction Input: \n```python\ncustomers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nsorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))\nsorted_customers\n```\nObservation: \nThought:",

"stop": [

"\nObservation:",

"\n\tObservation:"

]

}

[llm/start] [1:chain:AgentExecutor > 5:chain:LLMChain > 6:llm:ChatOpenAI] Entering LLM run with input:

{

"prompts": [

"Human: You are an agent designed to write and execute python code to answer questions.\nYou have access to a python REPL, which you can use to execute python code.\nIf you get an error, debug your code and try again.\nOnly use the output of your code to answer the question. \nYou might know the answer without running any code, but you should still run the code to get the answer.\nIf it does not seem like you can write code to answer the question, just return \"I don't know\" as the answer.\n\n\nPython REPL: A Python shell. Use this to execute python commands. Input should be a valid python command. If you want to see the output of a value, you should print it out with `print(...)`.\n\nUse the following format:\n\nQuestion: the input question you must answer\nThought: you should always think about what to do\nAction: the action to take, should be one of [Python REPL]\nAction Input: the input to the action\nObservation: the result of the action\n... (this Thought/Action/Action Input/Observation can repeat N times)\nThought: I now know the final answer\nFinal Answer: the final answer to the original input question\n\nBegin!\n\nQuestion: Sort these customers by last name and then first name and print the output: [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nThought:I can use the `sorted()` function to sort the list of customers. I will need to provide a key function that specifies the sorting order based on last name and then first name.\nAction: Python REPL\nAction Input: \n```python\ncustomers = [['Harrison', 'Chase'], ['Lang', 'Chain'], ['Dolly', 'Too'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Jen', 'Ayai']]\nsorted_customers = sorted(customers, key=lambda x: (x[1], x[0]))\nsorted_customers\n```\nObservation: \nThought:"

]

}

[llm/end] [1:chain:AgentExecutor > 5:chain:LLMChain > 6:llm:ChatOpenAI] [2.41s] Exiting LLM run with output:

{

"generations": [

[

{

"text": "The customers have been sorted by last name and then first name.\nFinal Answer: [['Jen', 'Ayai'], ['Harrison', 'Chase'], ['Lang', 'Chain'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Dolly', 'Too']]",

"generation_info": null,

"message": {

"content": "The customers have been sorted by last name and then first name.\nFinal Answer: [['Jen', 'Ayai'], ['Harrison', 'Chase'], ['Lang', 'Chain'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Dolly', 'Too']]",

"additional_kwargs": {},

"example": false

}

}

]

],

"llm_output": {

"token_usage": {

"prompt_tokens": 460,

"completion_tokens": 67,

"total_tokens": 527

},

"model_name": "gpt-3.5-turbo"

}

}

[chain/end] [1:chain:AgentExecutor > 5:chain:LLMChain] [2.41s] Exiting Chain run with output:

{

"text": "The customers have been sorted by last name and then first name.\nFinal Answer: [['Jen', 'Ayai'], ['Harrison', 'Chase'], ['Lang', 'Chain'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Dolly', 'Too']]"

}

[chain/end] [1:chain:AgentExecutor] [7.75s] Exiting Chain run with output:

{

"output": "[['Jen', 'Ayai'], ['Harrison', 'Chase'], ['Lang', 'Chain'], ['Elle', 'Elem'], ['Geoff', 'Fusion'], ['Trance', 'Former'], ['Dolly', 'Too']]"

}
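A quick check in plain Python helps when reading a trace like the one above. In that trace the Python REPL tool returned an empty string, seemingly because the generated code ended with the bare expression sorted_customers instead of a print call, so the model wrote its final answer without seeing the sorted list. The sketch below recomputes the order with the same key function and compares it with the final answer copied from the trace. The names expected, trace_answer, and mismatches are introduced here for illustration only.

```python
customer_list = [
    ["Harrison", "Chase"],
    ["Lang", "Chain"],
    ["Dolly", "Too"],
    ["Elle", "Elem"],
    ["Geoff", "Fusion"],
    ["Trance", "Former"],
    ["Jen", "Ayai"],
]

# Same key as in the agent's code: sort by last name, then by first name.
expected = sorted(customer_list, key=lambda name: (name[1], name[0]))

# Final answer copied from the debug trace.
trace_answer = [
    ["Jen", "Ayai"],
    ["Harrison", "Chase"],
    ["Lang", "Chain"],
    ["Elle", "Elem"],
    ["Geoff", "Fusion"],
    ["Trance", "Former"],
    ["Dolly", "Too"],
]

# Positions where the trace disagrees with the real sort order.
mismatches = [
    (index, want, got)
    for index, (want, got) in enumerate(zip(expected, trace_answer))
    if want != got
]

for index, want, got in mismatches:
    print(f"position {index}: expected {want}, trace has {got}")
```

The comparison flags the Chain/Chase and Former/Fusion pairs, which matches the correctly sorted output from the run in section 6.2. It is a reminder to check the tool's Observation, not just the Final Answer, when debugging an agent.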

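6.4 Custom agent tools

A major strength of agents is that they can connect to your own information sources, APIs, and databases. This section shows how to create a custom tool that connects to your own data source. As an example, we create a tool that returns the current date.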

#!pip install DateTime

Import the tool decorator. It can be applied to any function and turns that function into a tool that LangChain can call.

from langchain.agents import tool

from datetime import date

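Next we define the time function. It accepts any text string as input, but we never actually use that input; the function simply calls date to return today's date.

The time function has a very detailed docstring, which tells the agent when and how to call the tool. If the input has stricter requirements, for example a function that must always receive a search query or a SQL statement, that has to be stated in the docstring.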

@tool

def time(text: str) -> str:

    """Returns todays date, use this for any questions related to knowing todays date. \

    The input should always be an empty string, and this function will always return todays date - any \

    date mathmatics should occur outside this function."""

    return str(date.today())
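To show why the docstring matters, here is a rough sketch of what a tool decorator could do with it. This is not LangChain's implementation of @tool: SimpleTool and simple_tool are hypothetical names, and the assumption is only that a tool needs a name, a description the agent can read, and a callable.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable


@dataclass
class SimpleTool:
    """Minimal tool record: what it is called, when to use it, and how to run it."""
    name: str
    description: str
    func: Callable[[str], str]

    def run(self, text: str) -> str:
        return self.func(text)


def simple_tool(func: Callable[[str], str]) -> SimpleTool:
    """Wrap a function into a SimpleTool, using its name and docstring."""
    if not func.__doc__:
        raise ValueError("a tool needs a docstring so the agent knows when to use it")
    return SimpleTool(name=func.__name__, description=func.__doc__.strip(), func=func)


@simple_tool
def time(text: str) -> str:
    """Returns today's date. The input should always be an empty string."""
    return str(date.today())


print(time.name)         # the function name becomes the tool name
print(time.description)  # the docstring becomes the description shown to the agent
print(time.run(""))      # today's date in ISO format
```

In this sketch the description is the only information the agent would have for deciding when to call the tool, which is why a vague or missing docstring leads to poor tool selection.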

Now we create another agent, this time adding the time tool to the existing list of tools. Finally, we call the agent and ask what today's date is.

agent= initialize_agent(

tools + [time],

llm,

agent=AgentType.CHAT_ZERO_SHOT_REACT_DESCRIPTION,

handle_parsing_errors=True,

verbose = True)

Note:

The agent will sometimes come to the wrong conclusion (agents are a work in progress!).

If it does, please try running it again.

try:

    result = agent("whats the date today?")

except Exception:

    print("exception on external access")

> Entering new AgentExecutor chain...

Thought: I need to use the `time` tool to get today's date.

Action:

```

{

"action": "time",

"action_input": ""

}

```

Observation: 2023-06-27

Thought:I have successfully retrieved today's date using the `time` tool.

Final Answer: Today's date is 2023-06-27.

> Finished chain.

As the output shows, the model knows it should call the time tool with an empty string as action_input, and it returns today's date.
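The tool's docstring says that any date mathematics should happen outside the function. A minimal sketch of what that can look like: the tool returns an ISO-formatted string, and the caller parses it and does the arithmetic. The fixed date below is the Observation from the run above; the variable names are illustrative.

```python
from datetime import date, timedelta

tool_output = "2023-06-27"  # the Observation returned by the time tool in the run above

# Parse the ISO string back into a date object, then do the math outside the tool.
today = date.fromisoformat(tool_output)
in_30_days = today + timedelta(days=30)

print(in_30_days.isoformat())  # 2023-07-27
```

Keeping the tool this narrow means its description stays simple, and any calculation can be handled by ordinary code or by another tool such as the calculator.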

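That wraps up the lesson on agents. This is one of the newer, more exciting, and more experimental parts of LangChain, so I hope you enjoy using it. Hopefully it has shown how a language model can act as a reasoning engine that takes different actions and connects to other functions and data sources.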

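7. Summary

In this short course you saw a range of applications: processing customer reviews, building an app that answers questions about documents, and even letting an LLM decide when to call external tools (such as web search) to answer complex questions. You also saw that LangChain lets you build all of these quite efficiently with a fairly small amount of code.

There are many other applications you can build with language models. These models are powerful because they apply to such a wide range of tasks, whether that is answering questions about a CSV file, querying a SQL database, or interacting with an API.

LangChain offers many examples of accomplishing these tasks by combining chains, prompts, and output parsers, along with further chains. Much of this is thanks to the LangChain community. If you have not done so yet, I hope you will open your laptop or desktop, run pip install langchain, and use the library to build some amazing applications.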